Streaming Data on Vercel

Learn how Vercel enables streaming Function responses over time for e-commerce, AI, and more.

Vercel Functions support streaming responses, allowing you to render parts of the UI as they become ready. This lets users interact with your app before the entire page finishes loading by populating the most important components first. Common use cases include:

- E-commerce: Render the most important product and account data early, letting customers shop sooner

- AI applications: Streaming responses from LLM-powered services lets you display response text as it arrives rather than waiting for the full result

Vercel enables you to use the standard Web Streams API in your functions. To start immediately, deploy a streaming template:

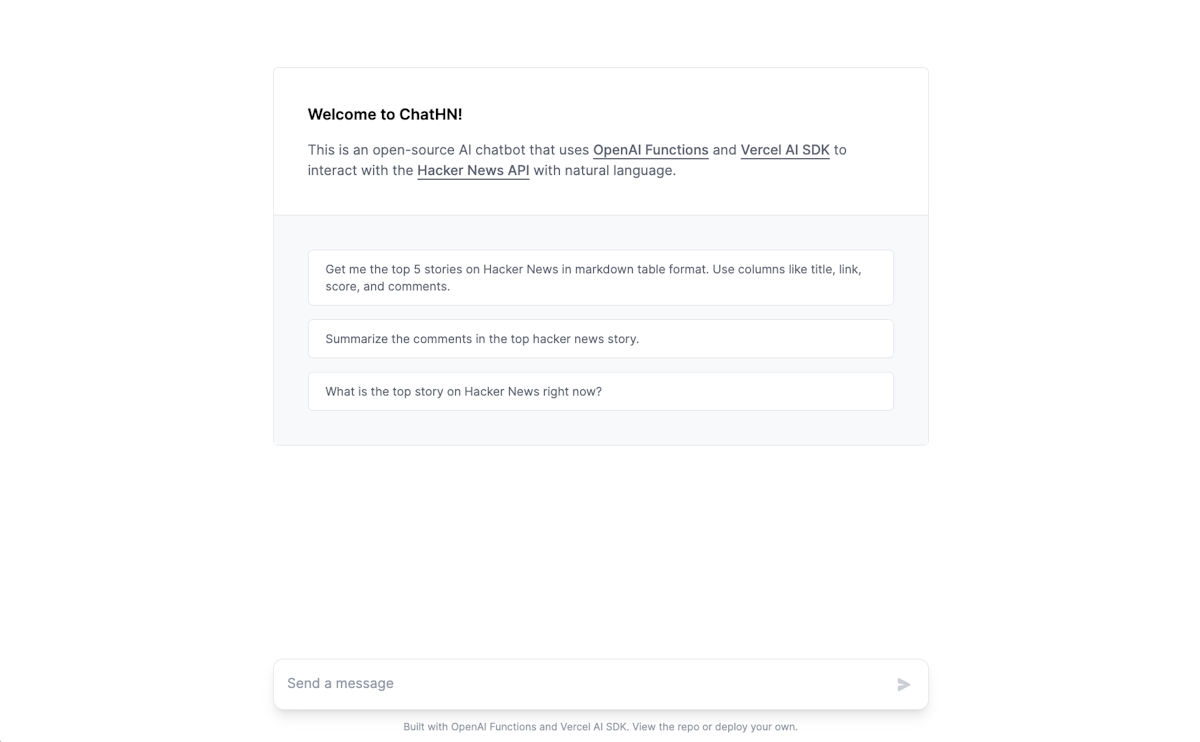

ChatHN – Chat with Hacker News

AI chatbot that uses OpenAI Functions and Vercel AI SDK to interact with the Hacker News API with natural language.

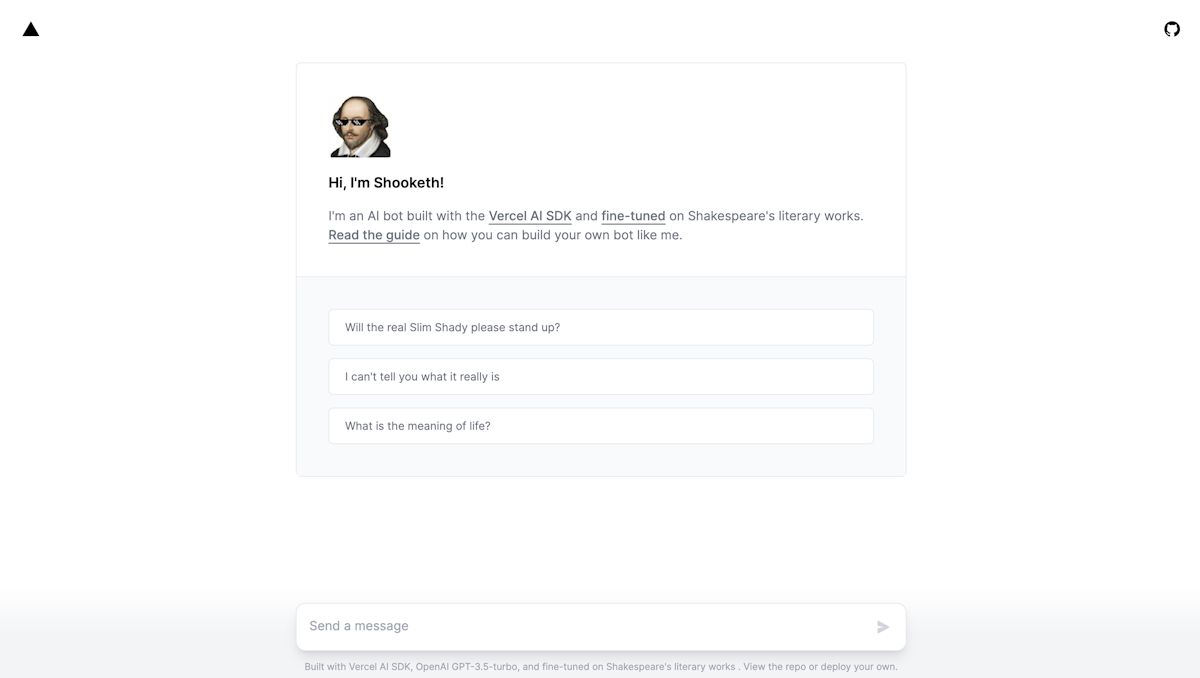

Shooketh – AI bot fine-tuned on Shakespeare

An AI bot built with the Vercel AI SDK, OpenAI gpt-3.5-turbo, and fine-tuned on Shakespeare's literary works

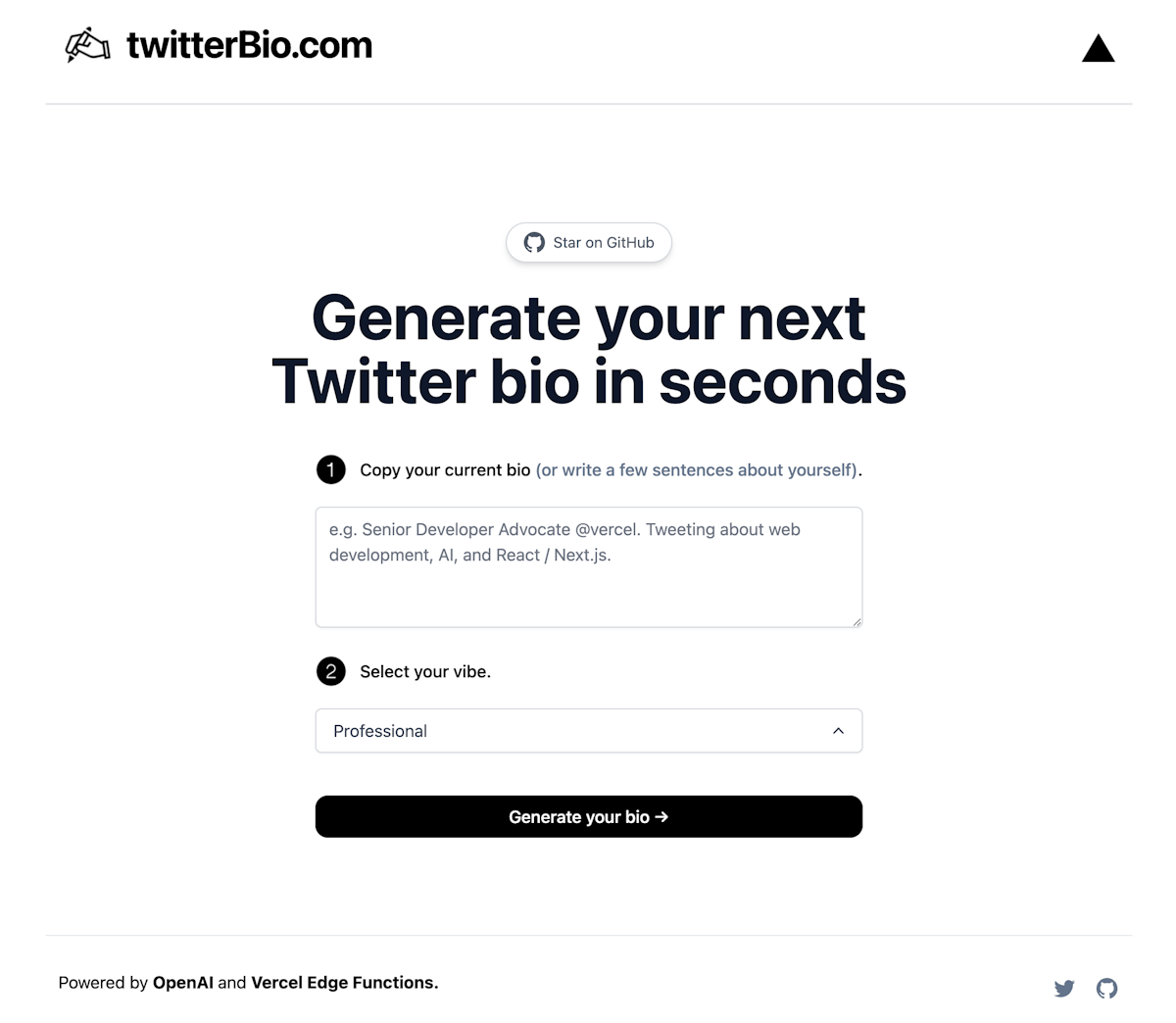

AI Twitter Bio Generator

Generate your Twitter bio with OpenAI GPT-3 API (text-davinci-003) and Vercel Edge Functions with streaming.

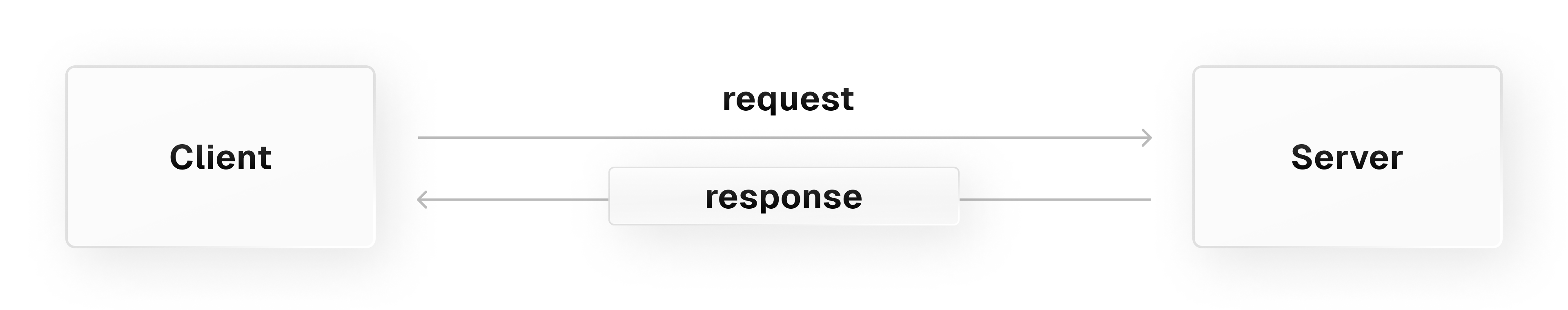

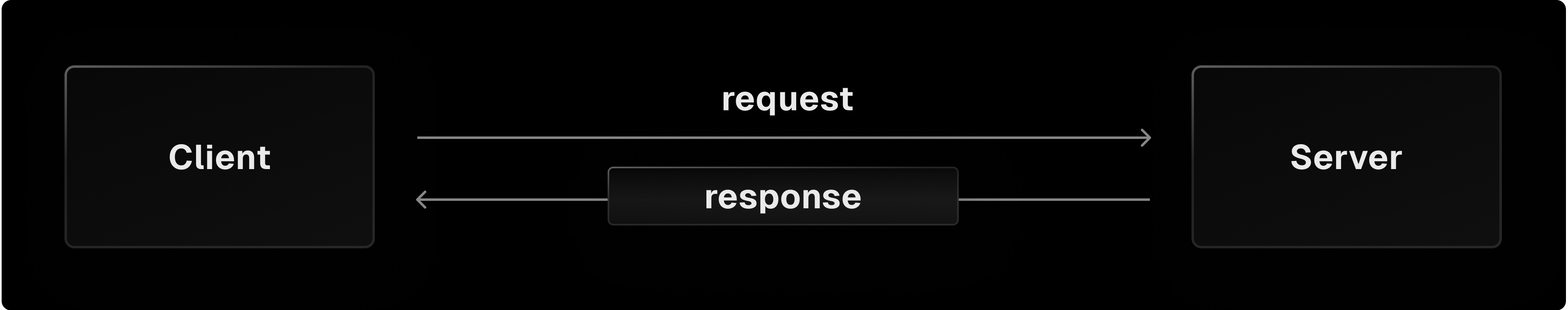

HTTP responses typically send the entire payload to the client all at once. This approach can result in a slow user experience if the payload is large or computationally intensive to produce.

The Web Streams API enables you to stream chunks of the payload as they become available, improving your users' perception of how fast data is loading. It is supported in most major web browsers and popular runtimes, such as Node.js and Deno.

The Web Streams API helps you:

- Break large data into chunks: Chunks are portions of data sent over time

- Handle backpressure: Backpressure occurs when chunks are streamed from the server faster than they can be processed in the client, causing a backup of data

- Build more responsive apps: Rendering your UI progressively as data chunks arrive can improve your users' perception of your app's performance
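As a minimal sketch of these ideas, the Function below returns a `Response` whose body is a `ReadableStream`, so each piece of text is sent as its own chunk. The `streamText` helper, the sample strings, and the 100 ms delay are hypothetical and exist only for illustration.

```typescript
// Hypothetical helper: sends each string in `parts` as a separate chunk.
function streamText(parts: string[], delayMs = 0): Response {
  const encoder = new TextEncoder();
  let index = 0;

  const stream = new ReadableStream<Uint8Array>({
    // pull() is called each time the stream wants another chunk.
    async pull(controller) {
      if (index >= parts.length) {
        controller.close();
        return;
      }
      if (delayMs > 0) {
        // Simulate work that takes time between chunks.
        await new Promise((resolve) => setTimeout(resolve, delayMs));
      }
      controller.enqueue(encoder.encode(parts[index++]));
    },
  });

  return new Response(stream, {
    headers: { 'Content-Type': 'text/plain; charset=utf-8' },
  });
}

// Web Handler signature: the client starts receiving text before the last chunk exists.
export function GET(): Response {
  return streamText(['Hello, ', 'streaming ', 'world!'], 100);
}
```

The client can start rendering `Hello, ` while the remaining chunks are still being produced.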

Chunks in web streams are fundamental data units that can be of many different types depending on the content, such as String for text or Uint8Array for binary files. Standard Function responses contain full payloads of data, while chunks are pieces of the payload that get streamed to the client as they're available.

For example, imagine you want to create an AI chat app that uses a Large Language Model to generate replies. Due to their large data sets, language models can be slow to generate replies.

Standard Function responses require you to send the full reply to the client once it's done, but streaming enables you to show each word of the reply as the model generates it, improving users' perception of your chat app's speed.
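The chat scenario above could be sketched roughly as follows. Here `generateReply` is a hypothetical stand-in for a language model (it just yields fixed words with a small delay), and `toByteStream` is an illustrative adapter, not part of any SDK.

```typescript
// Hypothetical stand-in for a language model: yields one word at a time.
async function* generateReply(): AsyncGenerator<string> {
  for (const word of ['Streaming ', 'shows ', 'each ', 'word ', 'early.']) {
    // Simulated generation time per word.
    await new Promise((resolve) => setTimeout(resolve, 20));
    yield word;
  }
}

// Hypothetical adapter: turns any async iterable of strings into a byte stream.
function toByteStream(source: AsyncIterable<string>): ReadableStream<Uint8Array> {
  const encoder = new TextEncoder();
  const iterator = source[Symbol.asyncIterator]();

  return new ReadableStream<Uint8Array>({
    // Ask the generator for the next word only when the stream wants more data.
    async pull(controller) {
      const { done, value } = await iterator.next();
      if (done) {
        controller.close();
      } else {
        controller.enqueue(encoder.encode(value));
      }
    },
    // Stop the generator if the client goes away.
    async cancel() {
      await iterator.return?.();
    },
  });
}

export function POST(): Response {
  return new Response(toByteStream(generateReply()), {
    headers: { 'Content-Type': 'text/plain; charset=utf-8' },
  });
}
```

Each word is forwarded as soon as the generator yields it, so the client never waits for the whole reply.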

Chunk sizes can be out of your control, so it's important that your code can handle chunks of any size. Chunk sizes are influenced by the following factors:

- Data source: Sometimes the original data is already broken up. For example, OpenAI's language models produce responses in tokens, or chunks of words

- Stream implementation: The server could be configured to stream small chunks quickly or large chunks at a lower pace

- Network: Factors like a network's Maximum Transmission Unit setting, or its geographical distance from the client, can cause chunk fragmentation and limit chunk size

  - In local development, chunk sizes won't be impacted by network conditions, as no network transmission is happening
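One way to cope with unpredictable chunk sizes is to buffer incoming bytes and only act on complete units, such as lines. The `readLines` helper below is a hypothetical sketch of that approach: it works no matter where chunk boundaries fall, including in the middle of a multi-byte character.

```typescript
// Hypothetical helper: yields complete lines regardless of how the bytes were chunked.
async function* readLines(stream: ReadableStream<Uint8Array>): AsyncGenerator<string> {
  const reader = stream.getReader();
  const decoder = new TextDecoder();
  let buffer = '';

  try {
    while (true) {
      const result = await reader.read();
      if (result.done) break;

      // { stream: true } holds back incomplete multi-byte characters until the next chunk.
      buffer += decoder.decode(result.value, { stream: true });

      // Everything before the last newline is complete; keep the remainder buffered.
      const lines = buffer.split('\n');
      buffer = lines.pop() ?? '';
      for (const line of lines) {
        yield line;
      }
    }

    // Flush any bytes the decoder was still holding, then emit the final partial line.
    buffer += decoder.decode();
    if (buffer.length > 0) {
      yield buffer;
    }
  } finally {
    reader.releaseLock();
  }
}
```

On the client, the same helper could be applied to the `body` of a `fetch` response.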

For an example Function that processes chunks, see Streaming Examples.

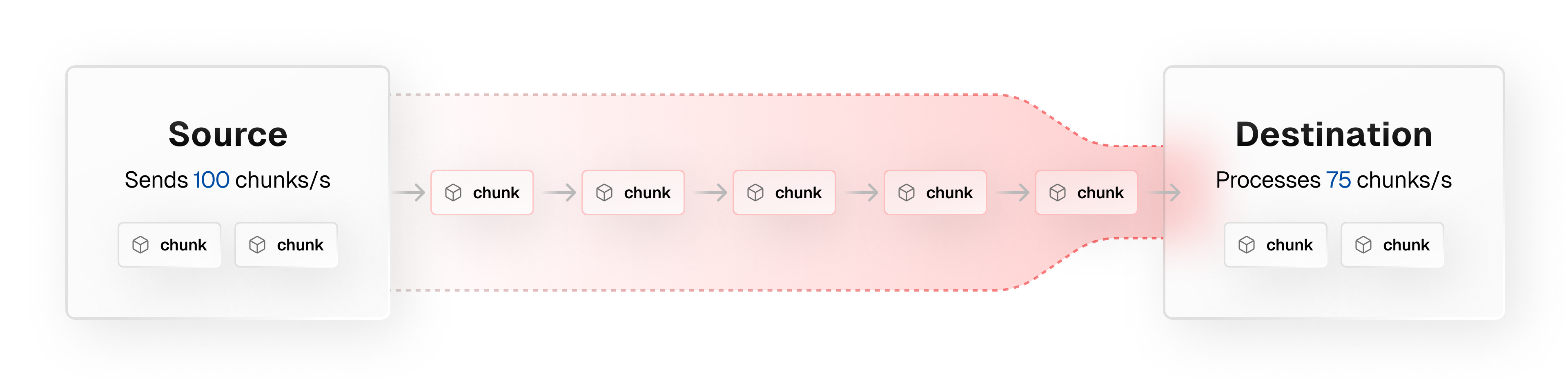

Once you understand how to deal with chunks of different sizes, you must understand how to deal with chunks arriving faster than you can process them in the client.

When the server streams data faster than the client can process it, excess data will queue up in the client's memory. This issue is called backpressure, and it can lead to memory overflow errors, or data loss when the client's memory reaches capacity.

For example, popular social media users can receive hundreds of notifications streamed to their web client per second. If their web client can't render the notifications fast enough, some may be lost, or the client may crash if its memory overflows.

You can handle backpressure with a technique called flow control. This technique manages data transfer rates between two nodes to avoid overwhelming a slow receiver.

For an example of how to handle backpressure, see Streaming Examples.
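As a rough illustration of flow control with the Web Streams API, the sketch below produces data in `pull()` and caps the internal queue with `highWaterMark`, so the producer only runs ahead of the consumer by a couple of chunks. The names `createNumberStream`, `consumeSlowly`, and the `stats` counter are hypothetical and only there to make the behavior observable.

```typescript
// Hypothetical producer: emits 0..limit-1 and counts how many chunks it has generated.
function createNumberStream(
  limit: number,
  stats: { produced: number },
): ReadableStream<number> {
  let next = 0;
  return new ReadableStream<number>(
    {
      // pull() is only called while the internal queue is below highWaterMark,
      // so a slow consumer automatically slows the producer down.
      pull(controller) {
        if (next >= limit) {
          controller.close();
          return;
        }
        stats.produced++;
        controller.enqueue(next++);
      },
    },
    // Buffer at most 2 chunks ahead of the consumer.
    { highWaterMark: 2 },
  );
}

// Hypothetical consumer: finishes handling one chunk before requesting the next.
async function consumeSlowly(
  stream: ReadableStream<number>,
  handle: (value: number) => Promise<void>,
): Promise<void> {
  const reader = stream.getReader();
  while (true) {
    const result = await reader.read();
    if (result.done) break;
    // The next read() is not issued until this chunk has been processed.
    await handle(result.value);
  }
}
```

Because data is generated on demand rather than pushed eagerly, unread chunks cannot pile up in memory beyond the configured queue size.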

- To get started streaming on Vercel, see Streaming Quickstart

- For more detailed code, see Streaming Examples

Some frameworks, like Next.js and SvelteKit, have built-in functionality for streaming UI components. This lets you specify components that should be rendered with streamed data without needing a Function.

See the following docs to learn more about streaming UI components with your preferred framework:

Most, but not all, functions allow you to stream responses. Streaming functions can be used in the following contexts:

- Functions using the `edge` runtime

  - These functions must begin sending a response within 25 seconds. After the initial response begins, you can continuously stream the response with no time limit

  - Your streamed response size cannot exceed Vercel's memory allocation limit of 128 MB

- Node.js Functions when using the following frameworks:

  - Next.js App Router

  - SvelteKit

  - Remix

  - Solid Start

- Node.js functions using the Web Handler signature:

app/api/hello/route.ts

    export const dynamic = 'force-dynamic'; // static by default, unless reading the request

    export function GET(request: Request) {
      return new Response(`Hello from ${process.env.VERCEL_REGION}`);
    }

- Node.js functions using the Node.js Handler signature and the `supportsResponseStreaming: true` flag:

app/api/hello/route.ts

    // Next.js App Router will always support streaming with Route Handlers
    export function GET(request: Request) {
      return new Response(`Hello from ${process.env.VERCEL_REGION}`);
    }
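For projects that do not use the App Router, a Function with the Node.js Handler signature might look something like the sketch below. It assumes the `supportsResponseStreaming` flag is set through an exported `config` object; that shape, the handler name, and the sample text are assumptions for illustration, so check the Vercel documentation for your setup.

```typescript
import type { IncomingMessage, ServerResponse } from 'node:http';

// Assumption: the flag named in the text is read from an exported `config` object.
export const config = {
  supportsResponseStreaming: true,
};

// Node.js Handler signature: write chunks to the response as they become available.
export default async function handler(
  _req: IncomingMessage,
  res: ServerResponse,
): Promise<void> {
  res.setHeader('Content-Type', 'text/plain; charset=utf-8');

  for (const part of ['Hello ', 'from ', 'a Node.js handler']) {
    res.write(part);
    // Simulated delay between chunks.
    await new Promise((resolve) => setTimeout(resolve, 50));
  }

  res.end();
}
```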

Consider configuring the maximum duration for Node.js functions so that responses can stream for longer than the default of 10 seconds on Hobby and 15 seconds on Pro.
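In a Next.js App Router Route Handler, one way to raise the limit is the `maxDuration` route segment config. The sketch below assumes an App Router project and a plan that permits the chosen value; `30` and the `tick` output are arbitrary examples.

```typescript
export const dynamic = 'force-dynamic';

// Assumption: 30 seconds is within the maximum duration your plan allows.
export const maxDuration = 30;

export function GET(): Response {
  const encoder = new TextEncoder();
  let tick = 0;

  const stream = new ReadableStream<Uint8Array>({
    async pull(controller) {
      if (tick >= 3) {
        controller.close();
        return;
      }
      // A real Function could keep streaming here until maxDuration is reached.
      await new Promise((resolve) => setTimeout(resolve, 10));
      controller.enqueue(encoder.encode(`tick ${tick++}\n`));
    },
  });

  return new Response(stream);
}
```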

Streaming is not supported in the following contexts:

- Node.js runtime functions using the Node.js handler without `supportsResponseStreaming: true`

- Node.js runtime functions using Next.js Pages Router

  - Note that even if the rest of your app uses Next.js Pages Router, you can use route handlers in the App Router to stream responses. Follow the App Router examples to do this
